The Market Temperature Model

What it measures, how it works, and what 18 years of data says about it

Every week, before I look at any individual chart or trade setup, I check the temperature of the market.

Not the actual temperature, obviously, but a model I’ve built that takes a range of market data and boils it down to a single number between 0 and 10. Think of it like a thermometer for the overall market environment.

The short version: A reading of 5 or above is broadly favourable for risk-taking. The 5-6 range is actually the strongest zone empirically. Below 5, proceed with caution. Below 4 is the danger zone - the only temperature range that produces negative average returns. I publish this number at the start of my weekly analysis so you can see the overall environment before we get into individual charts.

The real value isn’t any single reading - it’s having a systematic framework that keeps me grounded in what the data is actually saying, rather than getting swept up in narratives and emotions. I still use it alongside chart analysis and judgment - it just forms part of my process, not the whole of it.

If you just want to know what the number means each week, check out the “At a glance - how to read the temperature” section below.

How direction affects the reading

The model doesn’t just look at the number - it also tracks whether the temperature is rising, falling, or roughly flat compared to last week. This matters because a temperature of 4.5 that’s been rising from 3.5 tells a very different story than a 4.5 that’s falling from 5.5. The first is moving out of the danger zone. The second is heading toward it.

At two zones (4-5 and 6-7), direction matters enough that it changes the zone label itself. A reading of 4.5 that’s rising is called “Improving” - conditions are strengthening, worth testing the waters. The same 4.5 that’s sideways or falling is called “Cautious” - mixed or weakening. Similarly, a 6.5 that’s rising is “Strong” while a 6.5 that’s flat or falling is “Stable.”

At a glance - how to read the temperature:

7+ / Surge - Rare and exceptionally favourable - aggressive positioning, especially when coming out of a crash or big bear market.

6-7 Rising / Strong - Favourable and accelerating - aggressive positioning.

6-7 Sideways or Falling / Stable - Constructive but not accelerating - maintain exposure.

5-6 / Goldilocks Zone - The strongest zone empirically - normal to aggressive positioning, especially when rising.

4-5 Rising / Improving - Conditions strengthening - testing the waters, selective and not full size yet.

4-5 Sideways or Falling / Cautious - Mixed or weakening - very selective, tighten stops.

3-4 / High Risk - The true danger zone - very limited or no exposure, possibly some shorts.

0-3 / Extreme Stress - Rare. Capital preservation mode - no exposure, but watch for possible contrarian bounces.
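The table above is a simple mapping, so it can be written down directly. Below is a minimal sketch of one way to do that, not the model’s actual code. Two details are assumptions. The first is the hypothetical `FLAT_BAND` of 0.2, which decides how much a week-over-week change has to move before it counts as rising or falling. The article doesn’t say where “roughly flat” ends. The second is that each zone’s lower bound is treated as inclusive, which matches a 5.0 reading being called Goldilocks later on.

```python
from typing import Optional

# Hypothetical threshold: how big a week-over-week change must be before it
# counts as "rising" or "falling". The article doesn't give a number.
FLAT_BAND = 0.2


def direction(current: float, previous: Optional[float], flat_band: float = FLAT_BAND) -> str:
    """Classify the move since last week as 'rising', 'falling' or 'sideways'."""
    if previous is None:
        return "sideways"  # no prior reading, so treat it as flat
    change = current - previous
    if change > flat_band:
        return "rising"
    if change < -flat_band:
        return "falling"
    return "sideways"


def zone_label(current: float, previous: Optional[float] = None) -> str:
    """Map a 0-10 reading (plus last week's) to a zone name from the table.

    Lower bounds are treated as inclusive: 5.0 is Goldilocks, 7.0 is Surge.
    """
    trend = direction(current, previous)
    if current >= 7.0:
        return "Surge"
    if current >= 6.0:
        # Direction only changes the label in the 6-7 and 4-5 zones.
        return "Strong" if trend == "rising" else "Stable"
    if current >= 5.0:
        return "Goldilocks"
    if current >= 4.0:
        return "Improving" if trend == "rising" else "Cautious"
    if current >= 3.0:
        return "High Risk"
    return "Extreme Stress"
```

With this sketch, `zone_label(4.5, 3.5)` returns "Improving" and `zone_label(4.5, 5.5)` returns "Cautious". That mirrors the two 4.5 readings described earlier.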

The zone matters more than the precise decimal. And single-week readings in the middle zones should be treated with healthy scepticism until they persist - a reading that sustains for two or three weeks points to a genuine regime. The extremes are a bit different - a Surge reading coming out of a crash can be meaningful even if it only lasts a week or two, as we’ll see below.
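One way to make “wait until it persists” mechanical is to confirm a zone only after it has appeared for a set number of consecutive weekly readings. The sketch below does that on a list of zone labels. It is an illustration, not the author’s rule. The `min_weeks` default of 2 is taken from the “two or three weeks” guidance, and the function name is hypothetical. Because Surge can matter after a single week, you might run it with `min_weeks=1` for that zone.

```python
from typing import List, Optional


def confirmed_zones(labels: List[str], min_weeks: int = 2) -> List[Optional[str]]:
    """For each week, return the latest zone that has held for at least
    `min_weeks` consecutive weekly readings (None until one has)."""
    confirmed: Optional[str] = None
    run_label: Optional[str] = None
    run_length = 0
    out: List[Optional[str]] = []
    for label in labels:
        # Extend the current run, or start a new one when the label changes.
        if label == run_label:
            run_length += 1
        else:
            run_label, run_length = label, 1
        # Only promote the run to "confirmed" once it has persisted long enough.
        if run_length >= min_weeks:
            confirmed = run_label
        out.append(confirmed)
    return out
```

Note that a label flip within the same numeric band, such as Strong to Stable, restarts the run in this sketch. Grouping by numeric band instead would be an equally reasonable design choice.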

Context matters at the extremes. A Surge reading coming out of a bear market or crash - like the 2020 COVID recovery - typically signals a genuine all-clear, because it means conditions are very good, while fear is still elevated. That’s a powerful combination. A Surge reading at the end of a long, low-volatility uptrend - like early 2018 - can signal something closer to a blow-off top.

So how does it work?

The model looks at 12 indicators across four pillars. Each pillar captures a different dimension of market health, and I weight them based on how much information I think they carry.

The idea isn’t original. Hedge funds and institutional research shops have been building composite condition indicators like this for decades. However, I wanted something I could build myself, understand completely, tune to my own trading style, and share with you each week.

The first pillar is what I call Tape and Trend. This gets the most weight - 40% of the total score - because price and participation are the most direct measures of what the market is actually doing.

In here I’ve got the trend structure of SPY and QQQ (are they in confirmed uptrends, downtrends, or something messy in between?), a couple of breadth indicators showing how many stocks are actually participating in the move, and a momentum reading that tells me whether the market’s push is accelerating or fading.

When most stocks are going up and the trend is healthy, this pillar scores high. When breadth starts declining and the trend is breaking down, it scores low.

The second pillar is Sentiment at 20%. This one is interesting because it works as a contrarian signal. I use the VIX here - without going into exactly how it’s scored, the key insight is that very low VIX readings tend to signal complacency (slightly bearish), while very high VIX readings tend to signal the kind of extreme fear that historically precedes recoveries (slightly bullish). This isn’t a timing tool - it’s a condition gauge.

The third pillar is Monetary and Macro at 20%. This captures the financial plumbing - credit stress, bond market volatility, and US dollar strength.

When credit spreads are tight, bond volatility is low, and the dollar is stable or declining, it’s a friendlier environment for equities. When these start flashing warning signs, the environment can get more challenging. These are the kind of signals that can deteriorate well before the stock market reacts, which is what makes them valuable.

The fourth pillar is Regime at 20%. This is about what’s happening under the surface - are investors rotating into growth sectors or defensive ones? Is the market rewarding risk-taking or punishing it?

These internal rotation patterns often shift before the headline index does, so they serve as early warning indicators.

All 12 indicators score on a scale from -1 (most bearish) to +1 (most bullish). Within each pillar, the individual scores get averaged, then mapped to the pillar’s point allocation. The four pillars add up to give the 0-10 temperature reading.
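The arithmetic in the previous paragraph is short enough to sketch. The 40/20/20/20 weights become 4, 2, 2 and 2 points out of 10. This sketch makes two assumptions of its own. First, it maps each pillar’s average linearly, so -1 gives zero points and +1 gives the full allocation; the article only says the average is “mapped”. Second, it skips missing indicators, in the spirit of the out-of-sample run where one pillar ran on fewer inputs. The pillar keys and the per-pillar indicator counts in the example are made up. The article doesn’t give the exact split of the 12 indicators.

```python
from statistics import mean
from typing import Dict, List, Optional

# Pillar point allocations from the 40/20/20/20 weighting (they sum to 10).
PILLAR_POINTS = {
    "tape_trend": 4.0,
    "sentiment": 2.0,
    "monetary_macro": 2.0,
    "regime": 2.0,
}


def pillar_points(scores: List[Optional[float]], points: float) -> float:
    """Average the available indicator scores (each in [-1, +1]) and map the
    average linearly onto [0, points]. Missing indicators (None) are skipped."""
    available = [s for s in scores if s is not None]
    if not available:
        raise ValueError("pillar has no available indicators")
    return (mean(available) + 1.0) / 2.0 * points


def temperature(pillars: Dict[str, List[Optional[float]]]) -> float:
    """Sum the four pillar contributions into a single 0-10 reading."""
    return sum(pillar_points(pillars[name], pts) for name, pts in PILLAR_POINTS.items())


# Hypothetical indicator scores (12 in total, one missing).
example = {
    "tape_trend": [1.0, 0.5, 0.0, 0.5, 0.5, 0.5],  # average 0.5  -> 3.0 points
    "sentiment": [0.0],                            # average 0.0  -> 1.0 point
    "monetary_macro": [None, 0.5, -0.5],           # average 0.0  -> 1.0 point
    "regime": [0.5, 0.0],                          # average 0.25 -> 1.25 points
}
# temperature(example) == 6.25
```

With every score at 0 the reading is exactly 5.0, which puts the neutral point at the bottom of the Goldilocks Zone.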

The output looks something like this: “Market Temperature: 6.2 / 10 (Stable).”

A few things this model is not.

It’s not a trading signal. A high temperature doesn’t mean “buy everything” and a low temperature doesn’t mean “sell everything.” It’s a condition gauge that informs position sizing and how aggressive or defensive I want to be.

If the temperature is 7+ (Surge), I’m more willing to hold positions through pullbacks and add to winners. Below 5, I’m tightening stops and reducing size - how aggressively depends on whether conditions are improving or clearly deteriorating. At 3 or below (Extreme Stress territory), I’m in capital preservation mode.

It’s not a crystal ball. Statistically, this week’s temperature tells you almost nothing about what SPY will do next week - the forward correlation is essentially zero. The temperature and SPY’s weekly performance tend to rise and fall together in real time (concurrent correlation of 0.37), but the model isn’t forecasting the future.

That said, the components that drive the temperature - breadth, sector rotation, credit conditions - tend to change direction before headline price does at major turning points. That’s why the temperature peaked before SPY at every major top in the 10-year in-sample period (2016-2026). The model doesn’t predict the market, but it does give you an early read on whether the environment is shifting - and I use that to adjust my positioning.

It’s not perfect. No model is. It uses historical data to calibrate what “normal” looks like, and markets can behave in ways that break historical patterns. I treat it as one input alongside chart analysis, not as the final word.

The model was designed before it was tested.

I want to flag this because I think it matters. The model architecture - the four pillars, the 12 indicators, the weightings, the scoring logic - was designed and frozen before the full backtests were run. Several indicators were actually removed during the design process because they turned out to be noise or even wrong-signed (meaning they were actively making the model worse). I’d rather have 12 indicators that work than 17 that include dead weight.

Two backtests were then run in sequence. First, a 10-year in-sample backtest (2016-2026) to validate the model against the period it was designed around. Then, an 8+ year out-of-sample backtest (2007-2016) to test whether the model held up on data it had never seen. In both cases, I didn’t go back and tweak weights or swap indicators to make the numbers look better. The findings you’ll see below were all discovered in those backtests, not targeted by them.

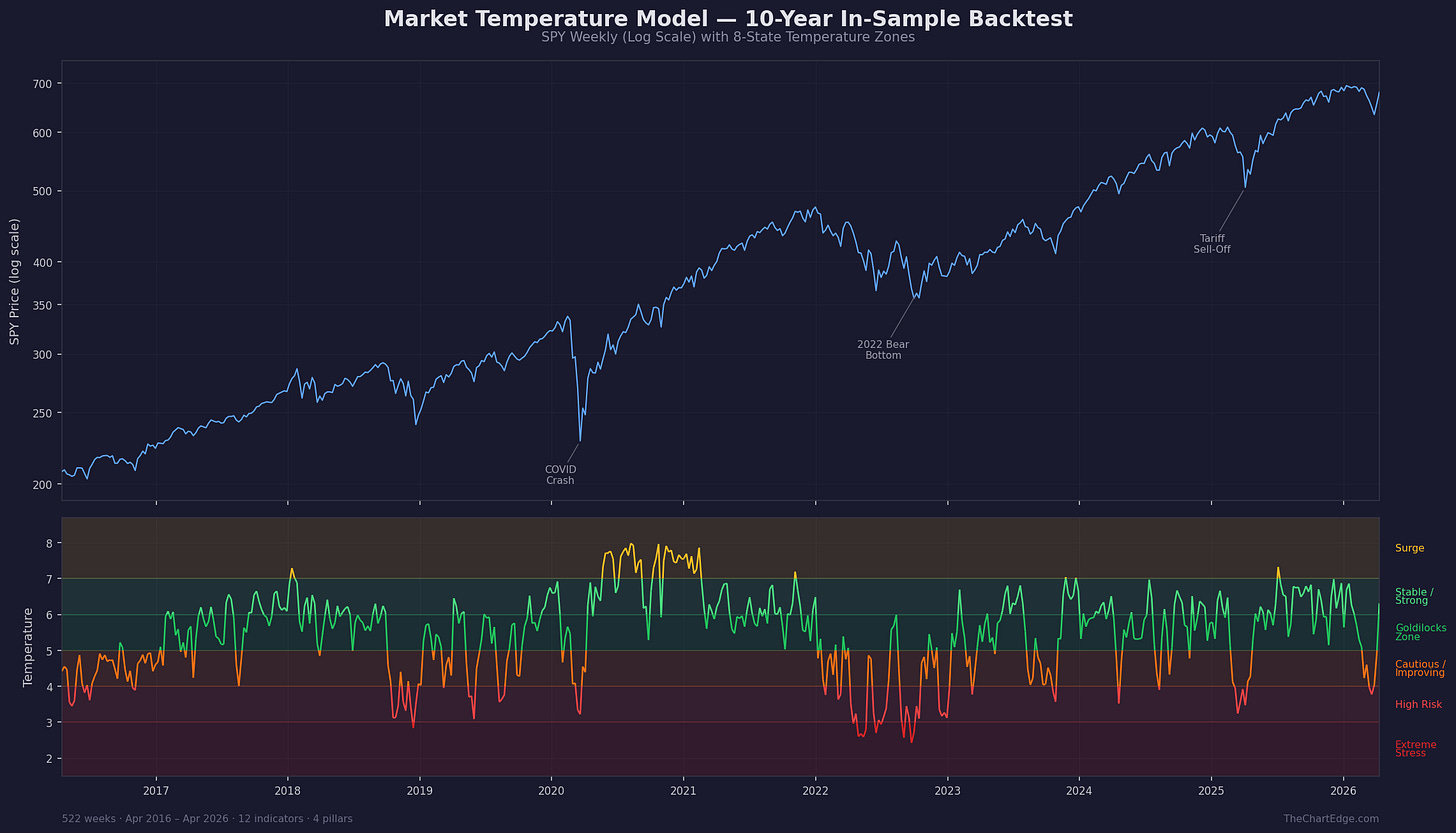

What the 10-year in-sample backtest showed (2016-2026)

The model has been through a rigorous empirical audit against 10 years of weekly data - 522 weeks from April 2016 through April 2026, covering the 2018 corrections, the COVID crash and recovery, the 2022 bear, the 2025 tariff selloff, and the March 2026 pullback. All 522 weekly readings were independently cross-validated by a second system to make sure the calculations are correct.

The first finding is where I think the model earns its keep: giving you a heads-up when conditions are changing beneath the surface, before that change shows up in headline price.

At market tops, breadth typically narrows and sector leadership rotates weeks before the index itself rolls over. The temperature picks that up. Across the six major SPY drawdowns of 8% or more in the 10-year sample, the temperature peaked before SPY peaked in every case. In four of the six, the lead time was around 2-4 weeks. In two cases (the 2022 bear and the 2026 pullback), it was closer to 8 weeks.

A few concrete examples. Before the COVID crash, the temperature peaked at 6.92 on January 17, 2020. It first dropped below 5.0 on January 31 - nearly three weeks before SPY peaked on February 19. It briefly bounced back above 5.0 for a couple of weeks, but by February 28 it was below 5.0 for good and stayed there through the crash.

Before the 2025 tariff selloff, the temperature peaked at 6.66 on January 24, 2025. SPY peaked on February 19, nearly four weeks later. By February 28, the temperature was already below 5.

Going into the pullback we’ve just come through (March 2026), the temperature peaked at 6.98 on December 5, 2025 - nearly eight weeks before SPY made its closing high on January 27, 2026.
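Lead times like these can be measured in two steps. First, find each SPY peak that was followed by a drawdown of 8% or more. Then, within a lookback window, find how many weeks earlier the temperature made its high. Here’s a minimal sketch on weekly closes. The 8% threshold comes from the article. The 12-week default `lookback` and the rule that a new drawdown can only start after a fresh price high are assumptions. This isn’t necessarily how the backtest defined its six drawdowns.

```python
from typing import List, Tuple


def major_drawdown_peaks(closes: List[float], threshold: float = 0.08) -> List[int]:
    """Index of the price peak before each drawdown of at least `threshold`
    (0.08 = 8%). A new episode can only start after a fresh high."""
    peaks: List[int] = []
    peak_idx = 0
    counted = False  # has the current peak already produced a counted drawdown?
    for i, price in enumerate(closes):
        if price > closes[peak_idx]:
            peak_idx, counted = i, False
        elif not counted and price <= closes[peak_idx] * (1 - threshold):
            peaks.append(peak_idx)
            counted = True
    return peaks


def temperature_lead_weeks(temps: List[float], closes: List[float],
                           lookback: int = 12,
                           threshold: float = 0.08) -> List[Tuple[int, int]]:
    """For each major drawdown, return (spy_peak_index, lead_in_weeks): how many
    weeks before SPY's peak the temperature hit its highest reading within the
    `lookback` window (0 means the same week; ties go to the earliest week)."""
    results = []
    for spy_peak in major_drawdown_peaks(closes, threshold):
        start = max(0, spy_peak - lookback)
        window = temps[start:spy_peak + 1]
        temp_peak = start + window.index(max(window))
        results.append((spy_peak, spy_peak - temp_peak))
    return results
```

A lead of 0 corresponds to the one exception the out-of-sample test found, where both series peaked in the same week.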

The pattern at bottoms is similar but usually with less lead time. During the 2022 bear, the temperature hit its absolute low of 2.42 (Extreme Stress) on September 23. SPY didn’t reach its low until October 13 - about three weeks later. By that point, the temperature had already climbed back to the 3.0-3.5 range. The model’s internals had started stabilising while price was still making new lows.

The 2025 tariff bottom showed a similar but messier pattern. The temperature hit its low of 3.24 on March 14 with SPY at 562, but SPY continued falling to around 482 (intraday) on April 7 - a little over three weeks later. Even at the SPY bottom, the temperature held above its own low (just). But it wasn’t a clean, steady improvement - the temperature wobbled back toward its low that week rather than rising smoothly.

These are small samples and I’d strongly caution against treating them as general rules. But they’re consistent with what the model is designed to measure.

The other finding is about conditions versus returns.

Over the 10-year sample, a strategy that held SPY only when the temperature was 5.0 or higher would have captured about 90% of SPY’s total return while only being invested 68% of the time. Maximum drawdown was cut from -32% to -14%.
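The three numbers in the previous paragraph come from one kind of calculation. A simple in/out rule is compared against buy-and-hold, measuring the share of the cumulative gain captured, the fraction of weeks invested, and the maximum drawdown. Here’s a minimal sketch of that comparison, not the backtest itself. It assumes the reading at the end of week t decides whether you hold SPY during week t+1, which avoids look-ahead. It also assumes cash earns zero and “return captured” means the ratio of cumulative gains. The article doesn’t state these conventions.

```python
from typing import Dict, List


def max_drawdown(equity: List[float]) -> float:
    """Largest peak-to-trough decline of an equity curve, as a negative fraction."""
    peak, worst = equity[0], 0.0
    for value in equity:
        peak = max(peak, value)
        worst = min(worst, value / peak - 1.0)
    return worst


def in_out_backtest(temps: List[float], weekly_returns: List[float],
                    threshold: float = 5.0) -> Dict[str, float]:
    """Hold SPY during week t+1 only if the reading at the end of week t is at
    or above `threshold`; otherwise sit in cash earning zero.

    `weekly_returns[t]` is SPY's return for the week ending on the date of
    `temps[t]`, so each decision only uses information available at the time.
    """
    strat, hold = [1.0], [1.0]
    invested_weeks = 0
    for t in range(len(temps) - 1):
        r = weekly_returns[t + 1]
        if temps[t] >= threshold:
            invested_weeks += 1
            strat.append(strat[-1] * (1.0 + r))
        else:
            strat.append(strat[-1])  # in cash: equity unchanged
        hold.append(hold[-1] * (1.0 + r))
    return {
        # Share of buy-and-hold's cumulative gain captured (assumes that gain is non-zero).
        "return_captured": (strat[-1] - 1.0) / (hold[-1] - 1.0),
        "time_invested": invested_weeks / (len(temps) - 1),
        "max_dd_strategy": max_drawdown(strat),
        "max_dd_buy_hold": max_drawdown(hold),
    }
```

Weekly closes will understate intraweek lows, so a drawdown measured this way can differ from one measured on daily data.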

During the weeks the model said conditions were favourable (5.0 or higher), the environment was genuinely better. The Sharpe ratio for those invested weeks was 1.32 vs 0.78 for buy-and-hold. The Sortino ratio, which only penalises downside volatility (I find it more meaningful, since upside volatility isn’t what hurts you), was 1.95 vs 1.12. Even on the most conservative basis, treating out-of-market weeks as earning zero (when in practice you’d be earning something on your cash), the Sharpe ratio still improved to 1.09 and the Sortino to 1.61.
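The two ways of counting described above can be made concrete. The first uses only the invested weeks. The second uses every week, with zero for weeks in cash. This sketch builds both return series and computes annualised Sharpe and Sortino ratios. Several conventions here are assumptions, because the article doesn’t state them: a zero risk-free rate, annualisation by the square root of 52, sample standard deviation for Sharpe, and a zero target for Sortino’s downside deviation. Different conventions will give somewhat different numbers.

```python
import math
from statistics import mean, stdev
from typing import List


def sharpe(returns: List[float], periods_per_year: int = 52) -> float:
    """Annualised Sharpe ratio, assuming a zero risk-free rate."""
    return mean(returns) / stdev(returns) * math.sqrt(periods_per_year)


def sortino(returns: List[float], periods_per_year: int = 52) -> float:
    """Annualised Sortino ratio with a zero target: only below-zero returns
    count towards the downside deviation (needs at least one negative week)."""
    downside = math.sqrt(sum(min(r, 0.0) ** 2 for r in returns) / len(returns))
    return mean(returns) / downside * math.sqrt(periods_per_year)


def strategy_returns(temps: List[float], weekly_returns: List[float],
                     threshold: float = 5.0, invested_only: bool = True) -> List[float]:
    """Next-week SPY returns under the 'temperature >= threshold' rule.

    invested_only=True keeps only weeks in the market; False keeps every week
    and records 0.0 for weeks spent in cash (the conservative basis)."""
    out: List[float] = []
    for t in range(len(temps) - 1):
        if temps[t] >= threshold:
            out.append(weekly_returns[t + 1])
        elif not invested_only:
            out.append(0.0)
    return out
```

Adding zero-return weeks pulls the mean down more than it reduces volatility, which is consistent with the conservative basis producing the lower ratios quoted above.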

I’m not suggesting anyone use this as a mechanical in-out trading system - that’s not how I use it, and past performance is past performance. But the numbers are what convinced me that the model is worth taking seriously, and worth sharing publicly.

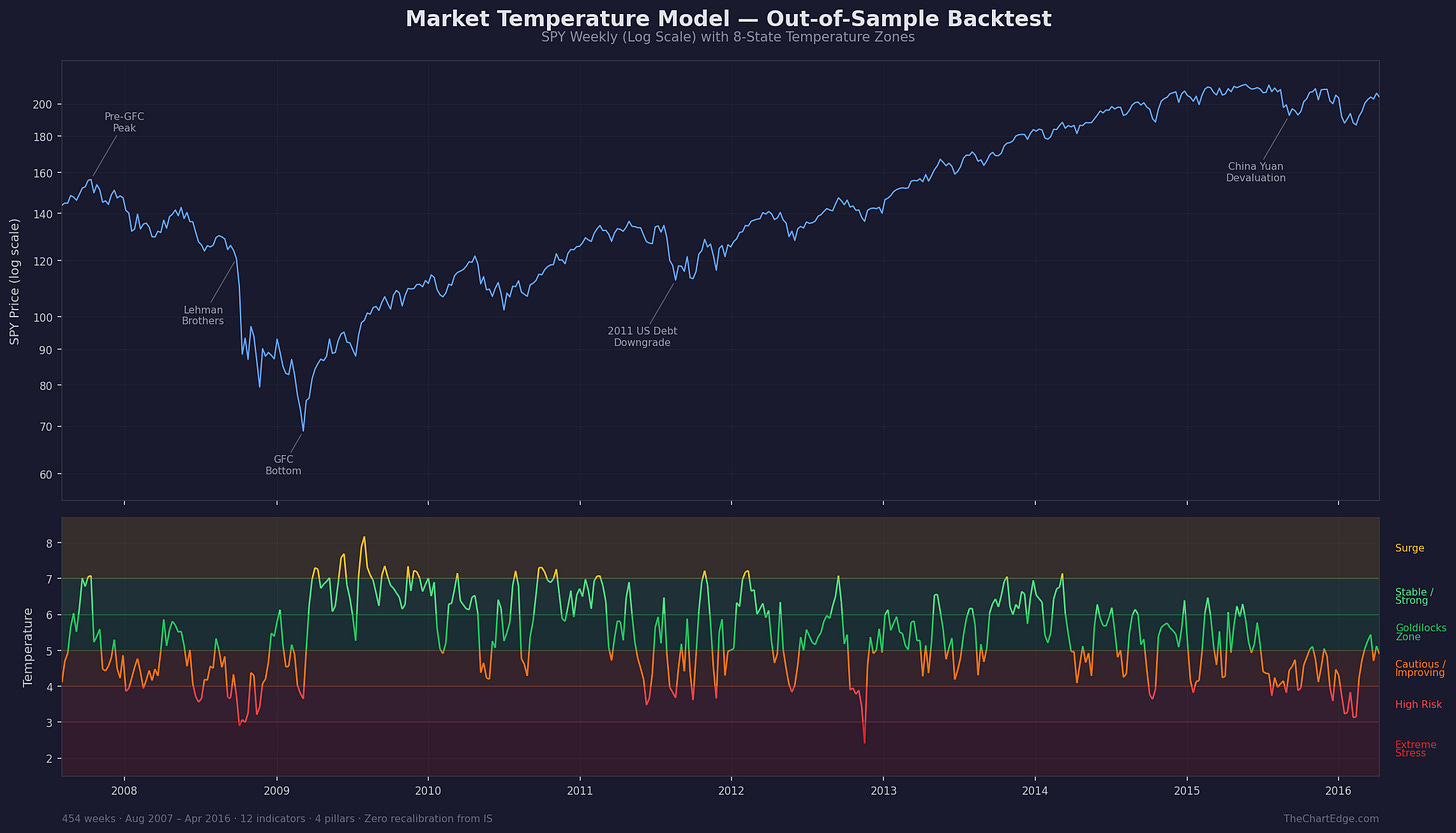

Does it hold up on data it was never built on?

A natural concern with any backtest is overfitting.

To test this, I ran the model on a separate period it was never designed on: August 2007 through April 2016 - over 450 weeks that include the Global Financial Crisis, the 2010 Flash Crash, the 2011 US debt downgrade, the European debt crisis, and the 2015 China yuan devaluation. Same weights, same scoring logic, same thresholds. One indicator wasn’t available for the first few years of the earlier period, so its pillar ran on two inputs instead of three until the data became available - but no substitutions or adjustments were made.

The results held up. The concurrent correlation between the temperature and SPY’s weekly performance was virtually identical across both periods (0.380 out-of-sample vs 0.373 in-sample). The Goldilocks Zone (5-6) produced almost the same risk-adjusted returns out-of-sample as in-sample.

The model correctly dropped below 5.0 during the GFC and stayed there for most of the bear market. And on the exact week the market bottomed in March 2009 - after a 56% drawdown - the temperature jumped from High Risk (3.6) straight to Goldilocks (5.0). This was the eighth time during the bear that the model had climbed back to 5.0 or higher. The first seven lasted 1-8 weeks and all failed. The eighth one sustained for 47 weeks and reached Surge.

That persistence pattern is the key practical insight. Short-lived crossings above 5.0 during a bear market are traps. The genuine recovery sustains.
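Counting crossings like these is a run-length calculation. Find every stretch of consecutive weekly readings at or above 5.0 and record how long it lasted. The sketch below is a hypothetical helper, not the tool used in the backtest. Bear in mind that a run’s length is only known once it ends. In real time you only know how many weeks the current run has lasted so far.

```python
from typing import List, Optional, Tuple


def episodes_above(temps: List[float], level: float = 5.0) -> List[Tuple[int, int]]:
    """Return (start_index, length_in_weeks) for every run of consecutive
    weekly readings at or above `level`."""
    runs: List[Tuple[int, int]] = []
    start: Optional[int] = None
    for i, t in enumerate(temps):
        if t >= level and start is None:
            start = i                       # a new crossing begins
        elif t < level and start is not None:
            runs.append((start, i - start))  # the crossing has ended
            start = None
    if start is not None:                   # a run still open at the end of the data
        runs.append((start, len(temps) - start))
    return runs
```

Applied to a bear-market stretch of readings, the lengths of the runs show head fakes as short runs and a genuine recovery as a long one.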

In the out-of-sample period, the temperature peaked before SPY at five of the six major tops - the only exception being the very start of the GFC in October 2007, where they peaked in the same week.

Across the combined 18 years and all twelve major drawdowns, the temperature peaked before SPY’s price peak in eleven of twelve cases.

Not everything generalised perfectly - a couple of individual indicators carried weaker signals in the earlier period, and the model’s behaviour when the temperature was above 5.0 but falling was more dangerous in the GFC era. Both of those findings improved my understanding of where the model is strongest and where I need to apply extra judgment. But the core mechanism - breadth, momentum, and trend as condition indicators - proved genuinely robust across two very different market regimes.

What about head fakes?

Across both the 10-year in-sample period (2016-2026) and the 8+ year out-of-sample period (2007-2016), the model dropped below 5.0 during every major drawdown.

During the 2022 bear, the model crossed above 5.0 on five separate bear market rallies - none lasted more than three weeks before the temperature dropped back.

The GFC was messier - which you’d expect from a 56% drawdown. There were seven false crossings above 5.0 during bear market rallies. Five of those lasted just one or two weeks - similar to the 2022 pattern. But two lasted longer (five and eight weeks), which is the kind of extended head fake you’d expect during a more severe, drawn-out bear. Even in that extreme, the model always dropped back below 5.0 before the worst damage hit.

So it can give brief readings above 5.0 during a bear market rally that don’t stick. But it doesn’t give a sustained all-clear while the market is falling apart. That distinction matters.

What about head fakes in the other direction? Yes, occasionally. The temperature can drop below 4.0 (into High Risk) and the market then absorbs the shock and recovers without a major drawdown.

A useful rule came out of the in-sample analysis: if the temperature drops below 4.0 and is still there two weeks later, it’s a genuine drawdown warning about 91% of the time. If it bounces back above 4.0 on the next weekly reading, the market usually shakes it off.

The out-of-sample period showed a similar pattern, though the discrimination was somewhat weaker during the GFC because even genuine warnings sometimes bounced briefly before conditions deteriorated further.
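The two-week rule for sub-4.0 readings can be written as a small classifier that labels each fresh drop below 4.0. This sketch reads “still there two weeks later” as the next two weekly readings both staying below 4.0. That interpretation is an assumption on my part, and the function name is hypothetical. The classifier only labels the signals. Checking whether a drawdown actually followed, which is what the 91% figure measures, is a separate step not shown here.

```python
from typing import List, Optional, Tuple


def classify_sub4_drops(temps: List[float], level: float = 4.0) -> List[Tuple[int, str]]:
    """Label each fresh drop below `level` (previous reading at or above it):

    'shaken_off' - the very next weekly reading is back at or above `level`
    'confirmed'  - the readings one and two weeks later are both still below
    'ambiguous'  - anything else, or not enough data yet
    """
    labels: List[Tuple[int, str]] = []
    for i in range(1, len(temps)):
        if not (temps[i] < level <= temps[i - 1]):
            continue  # not a fresh drop below the level
        nxt: Optional[float] = temps[i + 1] if i + 1 < len(temps) else None
        nxt2: Optional[float] = temps[i + 2] if i + 2 < len(temps) else None
        if nxt is not None and nxt >= level:
            labels.append((i, "shaken_off"))
        elif nxt is not None and nxt2 is not None and nxt2 < level:
            labels.append((i, "confirmed"))
        else:
            labels.append((i, "ambiguous"))
    return labels
```

The “ambiguous” bucket covers cases like a drop that stays below 4.0 for one extra week and then recovers. The rule as stated doesn’t address that case directly.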

The broader principle holds across both periods: a reading that sustains for two or three weeks tells you far more than any single week’s number.

Following along

I’ll be sharing the weekly temperature reading as a regular feature going forward. Over time, you’ll get a feel for what the different levels mean and how they relate to actual market conditions. That in itself is valuable information.

Beyond the weekly readings, I’ll also be publishing deeper dives here on TheChartEdge - breakdowns of specific indicators, case studies from real trade decisions, and explorations of how the model behaves in different market regimes.

If you want the weekly readings and the thinking behind them, subscribe - it’s free.

As always, I am open to all possibilities. Stay open minded and manage risk tightly.

Cheers!

Marcus Grant, CFTe

Disclaimer: The content provided in this newsletter is for informational and educational purposes only and should not be considered as financial, investment, or legal advice. The information shared is based on our research and analysis, but we are not a licensed financial advisor, nor can we guarantee its accuracy, completeness, or timeliness. Market conditions and financial instruments can change rapidly, and any opinions expressed may not be suitable for all investors. Any opinions expressed and/or securities mentioned do not constitute a recommendation to buy, sell, or hold that or any other security. You should conduct your own due diligence and consult with a licensed financial advisor or other professional before making any investment decisions. Past performance is not indicative of future results, and all investments carry the risk of loss. The authors and publishers of this newsletter are not responsible for any financial decisions made based on the content provided herein. By reading and/or subscribing to this newsletter, you acknowledge and agree that you are using the information at your own risk.